A Japanese version of this article is also available.

Per-Second Billing for EC2 Instances has begun. This is good news for AWS users who want to execute multiple jobs simultaneously.

Before that, EC2 usage was billed by the hour. Consider the following two cases:

(1) Use one EC2 instance for 50 min

(2) Use five EC2 instances for 10 min

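Under hourly billing, Case (2) costs five times as much as Case (1), because each of the five instances is charged for a full hour. Under per-second billing, both cases amount to 50 instance-minutes, so they cost the same.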
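
The comparison can be checked with a few lines of Python. This is only a minimal sketch: the price of 1 USD per instance-hour is a made-up number for illustration, and the calculation ignores any minimum charge per instance.

```python
import math

# Hypothetical price in USD per instance-hour (not a real EC2 price).
PRICE_PER_HOUR = 1.0


def hourly_billed_cost(instances, minutes, price_per_hour):
    """Cost when every started instance-hour is charged in full."""
    hours_charged = math.ceil(minutes / 60)
    return instances * hours_charged * price_per_hour


def per_second_billed_cost(instances, minutes, price_per_hour):
    """Cost when usage is charged by the second."""
    seconds = minutes * 60
    return instances * seconds * price_per_hour / 3600


# Case (1): one instance for 50 min. Case (2): five instances for 10 min.
case1_hourly = hourly_billed_cost(1, 50, PRICE_PER_HOUR)
case2_hourly = hourly_billed_cost(5, 10, PRICE_PER_HOUR)
case1_second = per_second_billed_cost(1, 50, PRICE_PER_HOUR)
case2_second = per_second_billed_cost(5, 10, PRICE_PER_HOUR)

print("hourly billing:    ", case1_hourly, "vs", case2_hourly)
print("per-second billing:", round(case1_second, 4), "vs", round(case2_second, 4))
```

With hourly billing the second case is five times more expensive; with per-second billing the two numbers are identical.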

Per-second billing is also applied to AWS Batch. In this article, a number of CFD (Computational Fluid Dynamics) jobs are executed with AWS Batch. The procedure below is based on the hands-on in JAWS-UG HPC. A usage fee for the jobs is also estimated at the end of this article.

Acknowledgement: Thanks to porcaro33, the creator of the original hands-on, for allowing me to publish this article.

Overview

The same network architecture as the one used in the original hands-on is employed.

By following the procedure below to build the environment with CloudFormation, an EC2 instance named "Ubuntu Bastion" is launched.

The files to be used are pre-loaded on the instance. You only have to edit some of them and build the Docker container.

By executing the pre-loaded Python script, jobs are submitted to AWS Batch and executed on auto-scaled EC2 instances.

OpenFOAM is used as the CFD software.

pitzDaily, one of the OpenFOAM tutorials, is calculated 720 times while varying the boundary condition of the incoming flow.

Velocity components for x, y and z directions are varied within a defined range.
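
As a rough illustration of such a sweep, the sketch below enumerates 720 inlet velocity vectors. The sample values (12 × 10 × 6 points) are my own assumption chosen only so that the product is 720; the actual ranges are defined in the hands-on files and are not reproduced here. The job name format follows the `JOB_00001` style printed by the submission script later in this article.

```python
import itertools

# Hypothetical sample points, NOT the values used in the hands-on.
UX_VALUES = [5.0 + 1.0 * i for i in range(12)]   # 12 values for x
UY_VALUES = [-2.0 + 0.5 * i for i in range(10)]  # 10 values for y
UZ_VALUES = [-1.0 + 0.4 * i for i in range(6)]   # 6 values for z


def as_openfoam_vector(velocity):
    """Format a velocity tuple as an OpenFOAM uniform vector entry."""
    ux, uy, uz = velocity
    return f"uniform ({ux} {uy} {uz})"


def inlet_cases():
    """Yield (job_name, velocity) for every combination of components."""
    combos = itertools.product(UX_VALUES, UY_VALUES, UZ_VALUES)
    for index, velocity in enumerate(combos, start=1):
        yield f"JOB_{index:05d}", velocity


cases = list(inlet_cases())
print(len(cases), "cases")
print(cases[0][0], as_openfoam_vector(cases[0][1]))
```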

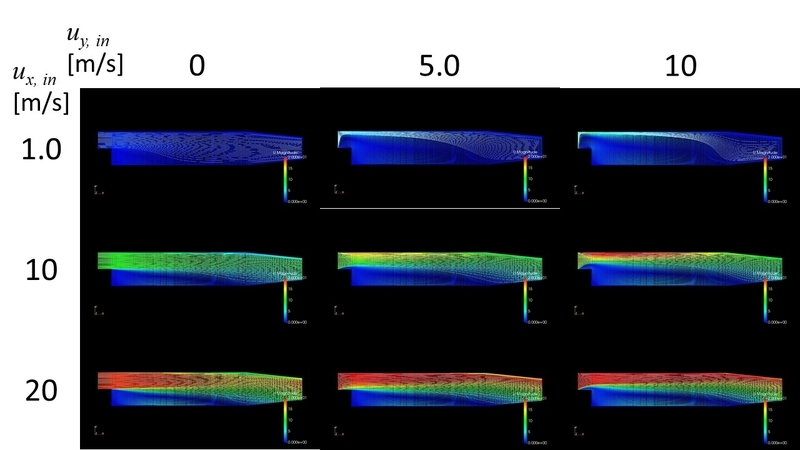

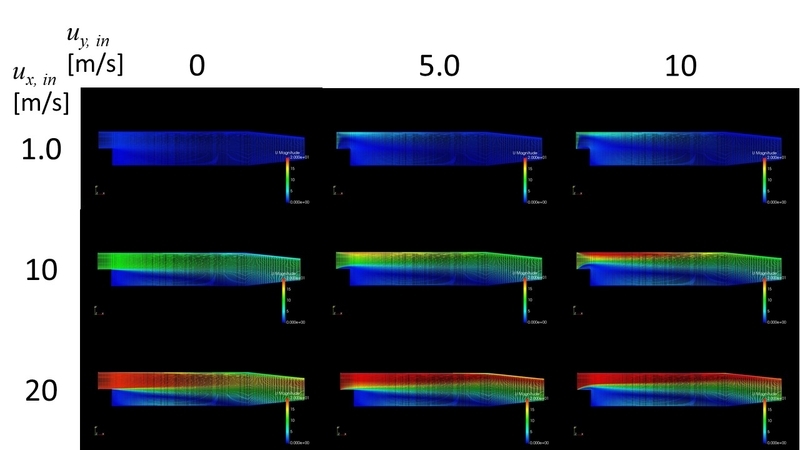

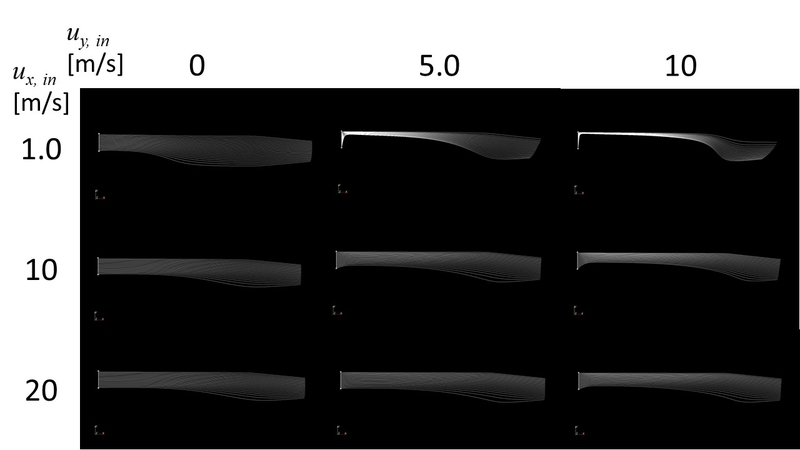

The figures show examples of the calculation results visualized with paraFoam.

The contours indicate velocity magnitude, and the white lines are streamlines drawn with StreamTracer.

Only the contours of velocity magnitude are shown.

Only the white StreamTracer lines are shown.

Procedure

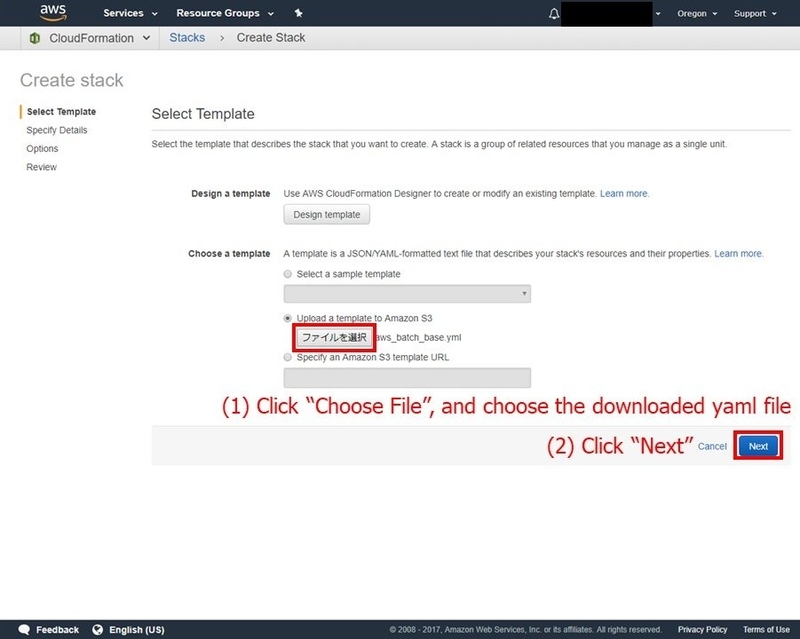

First, download the CloudFormation template file.

Environment creation with CloudFormation

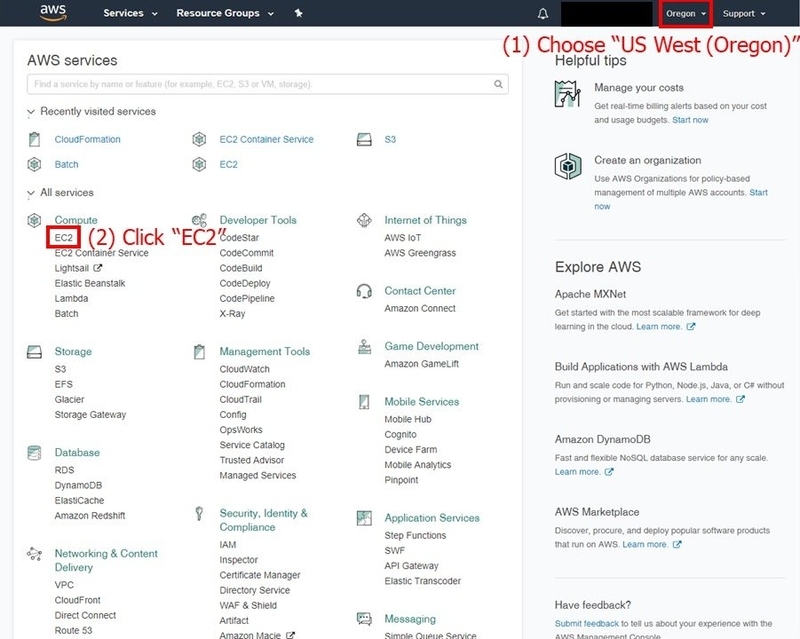

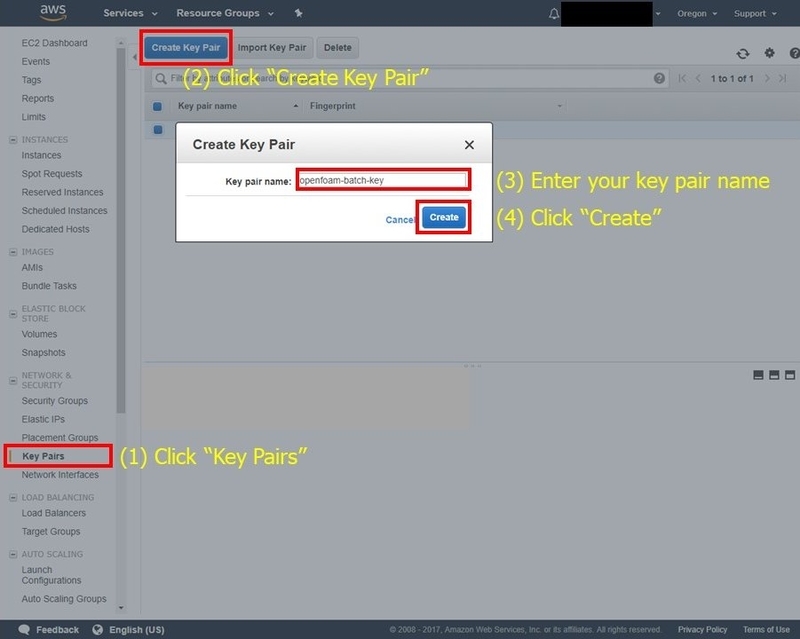

Log in to the AWS Management Console and choose the "US West (Oregon)" region. Click "EC2" to create a key pair for the SSH connection.

Click "Key Pairs" and then "Create Key Pair", enter a name for the new key pair, and click "Create". Save the private key file (.pem) from the download window. Take care not to lose or leak the key.

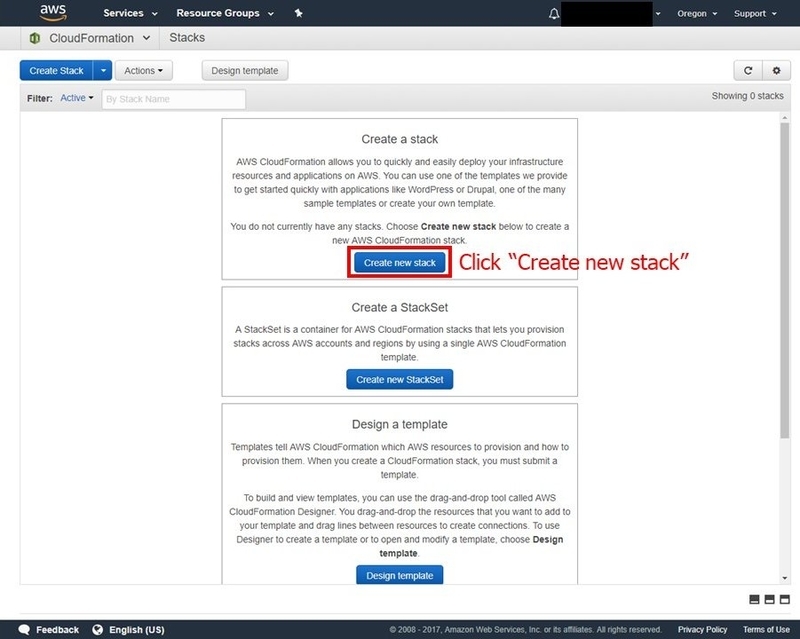

Go back to the home of the management console and click "CloudFormation" to create the environment. If this is your first time using CloudFormation, the following page will appear. Choose "Create New Stack".

Press "Choose File", and choose the CloudFormation template file you downloaded. After that click "Next".

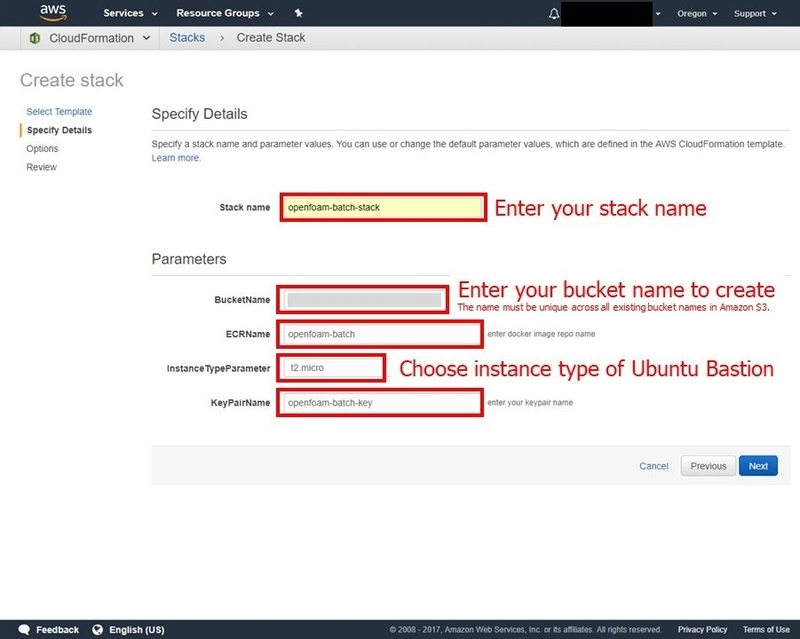

Enter each setting as follows.

| Item name | Detail |
|---|---|
| Stack name | Name of the CloudFormation stack. Ex) openfoam-batch-stack |
| BucketName | Name of the S3 bucket to save results of flow simulation. Globally unique name should be given. Ex) [AWS Account ID]-openfoam-batch-bucket |
| ECRName | Name of ECR (EC2 Container Registry) to manage Docker containers. Ex) openfoam-batch |
| InstanceTypeParameter | Instance type for Ubuntu Bastion. t2.micro is enough to complete this procedure |
| KeyPairName | Key pair used for SSH connection to Ubuntu Bastion. Input the name of the key pair (without extension) you created. |
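
If you prefer the command line to the console, the same parameters could be passed to `aws cloudformation create-stack`. The sketch below only builds and prints the command; it does not run it. Several things here are assumptions on my side: the template file name (`aws_batch_base.yml`, taken from the repository listing later in this article), the placeholder account ID and key pair name, and the `CAPABILITY_IAM` flag, which I assume corresponds to the acknowledgement checkbox in the console.

```python
import shlex


def create_stack_command(stack_name, template_path, parameters, region="us-west-2"):
    """Build the argv list for `aws cloudformation create-stack`."""
    command = [
        "aws", "--region", region, "cloudformation", "create-stack",
        "--stack-name", stack_name,
        "--template-body", "file://" + template_path,
        # Assumption: the template creates IAM resources.
        "--capabilities", "CAPABILITY_IAM",
        "--parameters",
    ]
    for key, value in parameters.items():
        command.append(f"ParameterKey={key},ParameterValue={value}")
    return command


# Placeholder values; replace them with your own.
parameters = {
    "BucketName": "123456789012-openfoam-batch-bucket",
    "ECRName": "openfoam-batch",
    "InstanceTypeParameter": "t2.micro",
    "KeyPairName": "my-key-pair",  # hypothetical key pair name
}

command = create_stack_command("openfoam-batch-stack", "aws_batch_base.yml", parameters)
print(" ".join(shlex.quote(part) for part in command))
```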

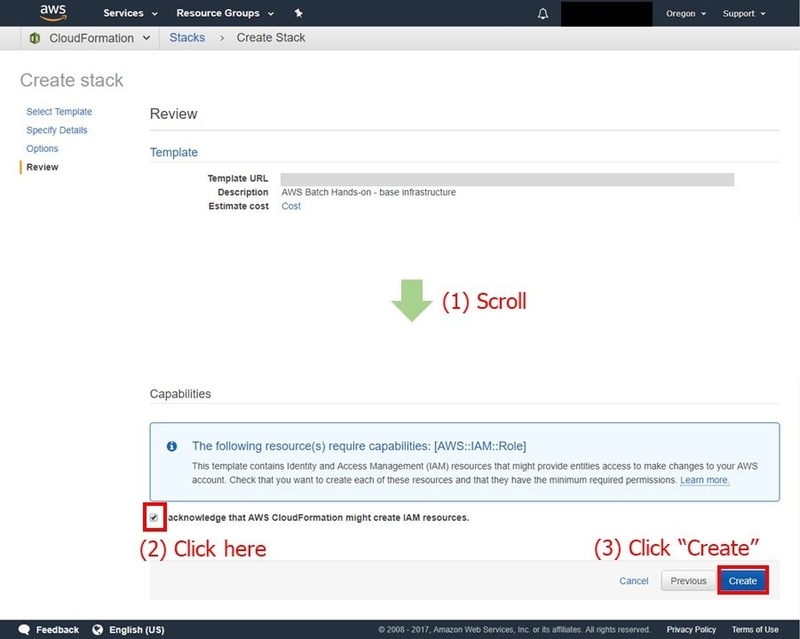

Scroll to the bottom of the page, check the box, and click "Create".

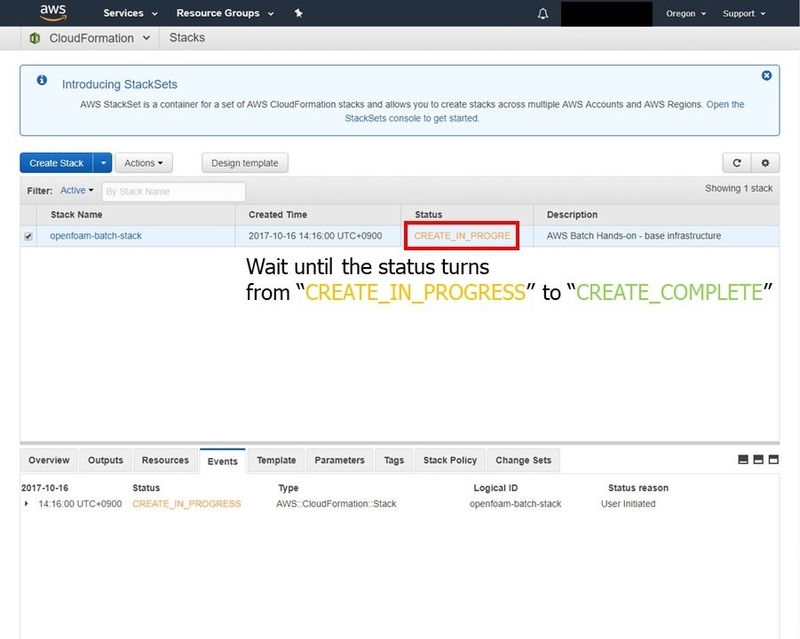

CloudFormation now starts creating the environment automatically. Ubuntu Bastion is launched, and this repository on GitHub is cloned onto it. Wait until the status of the stack changes to CREATE_COMPLETE on the management console.

Configuration of AWS Batch and Job submission

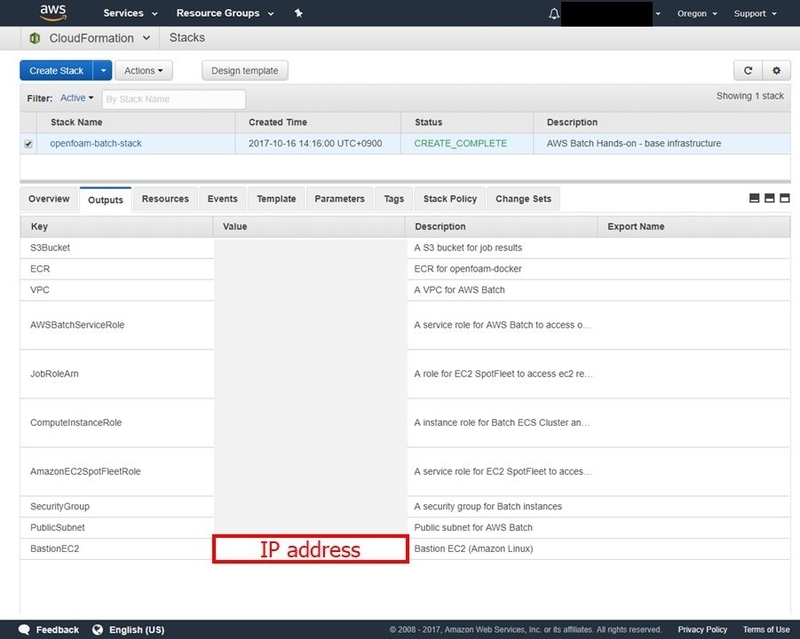

Connect to Ubuntu Bastion with SSH. The target IP address is shown in the Outputs tab of CloudFormation.

Confirm AWS CLI and Docker are installed.

$ aws --version

aws-cli/1.11.170 Python/2.7.12 Linux/4.4.0-1020-aws botocore/1.7.28

$ docker --version

Docker version 17.09.0-ce, build afdb6d4

Confirm files are cloned from GitHub.

$ cd /home/ubuntu/openfoam-docker

$ ll

total 64

drwxr-xr-x 3 root root 4096 Oct 16 05:18 ./

drwxr-xr-x 5 ubuntu ubuntu 4096 Oct 16 05:32 ../

-rwxr-xr-x 1 root root 8203 Oct 16 05:18 aws_batch_base.yml*

-rwxr-xr-x 1 root root 831 Oct 16 05:18 bastion_setup.sh*

-rwxr-xr-x 1 root root 1022 Oct 16 05:18 commands.sh*

-rwxr-xr-x 1 root root 608 Oct 16 05:18 computing_env.json*

-rwxr-xr-x 1 root root 1185 Oct 16 05:18 Dockerfile*

drwxr-xr-x 8 root root 4096 Oct 16 05:18 .git/

-rwxr-xr-x 1 root root 499 Oct 16 05:18 job_definition.json*

-rwxr-xr-x 1 root root 187 Oct 16 05:18 job_queue.json*

-rwxr-xr-x 1 root root 601 Oct 16 05:18 openfoam_run.sh*

-rwxr-xr-x 1 root root 1328 Oct 16 05:18 README.md*

-rwxr-xr-x 1 root root 1357 Oct 16 05:18 submit_batch.py*

-rwxr-xr-x 1 root root 1290 Oct 16 05:18 U_template*

Open openfoam_run.sh and set BUCKETNAME to the name of the S3 bucket you entered as BucketName in CloudFormation.

$ sudo vi openfoam_run.sh

Build the Docker container.

$ sudo docker build -t openfoam-batch:latest .

Next, store the container image in ECR. First, obtain the login command as follows.

$ aws --region us-west-2 ecr get-login --no-include-email

A long login command is returned. Prepend sudo to it and execute it. "Login Succeeded" is shown if the login was successful.

Tag the image and push it to ECR.

$ sudo docker tag openfoam-batch:latest [YourAwsAccountID].dkr.ecr.us-west-2.amazonaws.com/openfoam-batch:latest

$ sudo docker push [YourAwsAccountID].dkr.ecr.us-west-2.amazonaws.com/openfoam-batch:latest
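
The image name used in both commands follows a fixed pattern of account ID, region, repository, and tag. A small helper, sketched below, can build it so the two commands stay consistent. The account ID `123456789012` is a placeholder, and the helper names are my own.

```python
def ecr_image_uri(account_id, repository, tag="latest", region="us-west-2"):
    """Return the full ECR image name for a repository and tag."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


def tag_and_push_commands(account_id, repository, tag="latest", region="us-west-2"):
    """Return the docker tag and docker push commands as argv lists."""
    local_image = f"{repository}:{tag}"
    remote_image = ecr_image_uri(account_id, repository, tag, region)
    return [
        ["sudo", "docker", "tag", local_image, remote_image],
        ["sudo", "docker", "push", remote_image],
    ]


# Placeholder account ID; use your own.
for argv in tag_and_push_commands("123456789012", "openfoam-batch"):
    print(" ".join(argv))
```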

From here we configure AWS Batch and submit the jobs. The configuration for AWS Batch is described in job_definition.json, computing_env.json, and job_queue.json.

First, edit job_definition.json.

$ sudo vi job_definition.json

Next, edit computing_env.json.

$ sudo vi computing_env.json
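
For orientation, a compute environment definition for `create-compute-environment` generally has the shape sketched below. This is not the content of the actual computing_env.json. Only the 200 vCPU cap and the 40% bid percentage come from this article (see the Cost section); the environment name, instance family, subnet, security group, and role values are placeholders that you would replace with the ones from your own account.

```python
import json

# Sketch of a Spot compute environment. Placeholder values are marked.
compute_environment = {
    "computeEnvironmentName": "openfoam-batch-env",  # hypothetical name
    "type": "MANAGED",
    "state": "ENABLED",
    "computeResources": {
        "type": "SPOT",
        "minvCpus": 0,
        "maxvCpus": 200,        # scale-out limit mentioned in this article
        "desiredvCpus": 0,
        "bidPercentage": 40,    # 40% of the on-demand price
        "instanceTypes": ["c4"],                 # assumption
        "subnets": ["subnet-XXXXXXXX"],          # placeholder
        "securityGroupIds": ["sg-XXXXXXXX"],     # placeholder
        "instanceRole": "ecsInstanceRole",       # placeholder
        "spotIamFleetRole": "arn:aws:iam::123456789012:role/PLACEHOLDER",
    },
    "serviceRole": "arn:aws:iam::123456789012:role/PLACEHOLDER",
}

print(json.dumps(compute_environment, indent=2))
```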

You do not have to edit job_queue.json.

Create Job Definition, Compute Environment, and Job Queue with the following three AWS CLI commands, respectively.

$ aws --region us-west-2 batch register-job-definition --cli-input-json file://job_definition.json

$ aws --region us-west-2 batch create-compute-environment --cli-input-json file://computing_env.json

$ aws --region us-west-2 batch create-job-queue --cli-input-json file://job_queue.json

Finally, run the script "submit_batch.py". Messages like "Submitted JOB_00001 as ..." are printed.

$ python submit_batch.py

Submitted JOB_00001 as [Job ID]

Submitted JOB_00002 as [Job ID]

Submitted JOB_00003 as [Job ID]
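
The actual submit_batch.py is not reproduced in this article, but a submission loop of this kind could look roughly like the sketch below. It assumes a boto3-style Batch client with a `submit_job` method. The queue name, job definition name, and environment variable names (`UX`, `UY`, `UZ`) are hypothetical, and a small fake client is used so the sketch runs without AWS credentials.

```python
def submit_jobs(batch_client, job_queue, job_definition, velocities):
    """Submit one Batch job per inlet velocity and return the job IDs."""
    job_ids = []
    for index, (ux, uy, uz) in enumerate(velocities, start=1):
        job_name = f"JOB_{index:05d}"
        response = batch_client.submit_job(
            jobName=job_name,
            jobQueue=job_queue,
            jobDefinition=job_definition,
            containerOverrides={
                # Hypothetical variable names read by the container script.
                "environment": [
                    {"name": "UX", "value": str(ux)},
                    {"name": "UY", "value": str(uy)},
                    {"name": "UZ", "value": str(uz)},
                ]
            },
        )
        print(f"Submitted {job_name} as {response['jobId']}")
        job_ids.append(response["jobId"])
    return job_ids


class FakeBatchClient:
    """Offline stand-in for a real Batch client, for illustration only."""

    def __init__(self):
        self.calls = []

    def submit_job(self, **kwargs):
        self.calls.append(kwargs)
        return {"jobName": kwargs["jobName"], "jobId": f"fake-id-{len(self.calls)}"}


fake_client = FakeBatchClient()
ids = submit_jobs(
    fake_client,
    "hypothetical-job-queue",
    "hypothetical-job-definition",
    [(10.0, 0.0, 0.0), (11.0, 0.0, 0.0)],
)
```

To submit real jobs, you would pass `boto3.client("batch", region_name="us-west-2")` instead of the fake client, together with the queue and job definition names from your JSON files.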

On the AWS Batch dashboard, you can watch the job states change.

In the list of EC2 instances, you can confirm that the instances are terminated after all jobs have finished.

Deletion of resources

If you will not use the environment for a few days but plan to use it again, I recommend stopping the Ubuntu Bastion instance.

If you will never use the environment again, delete the resources with the following procedure.

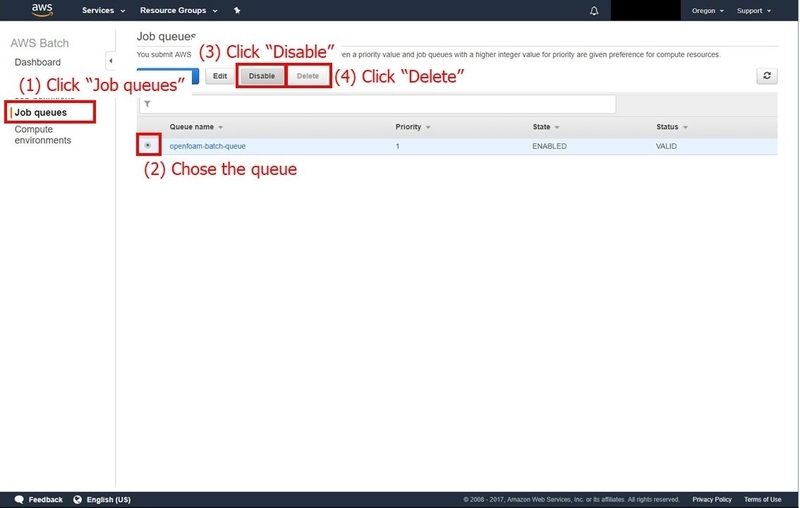

First, disable and delete the Job Queue on the console of AWS Batch.

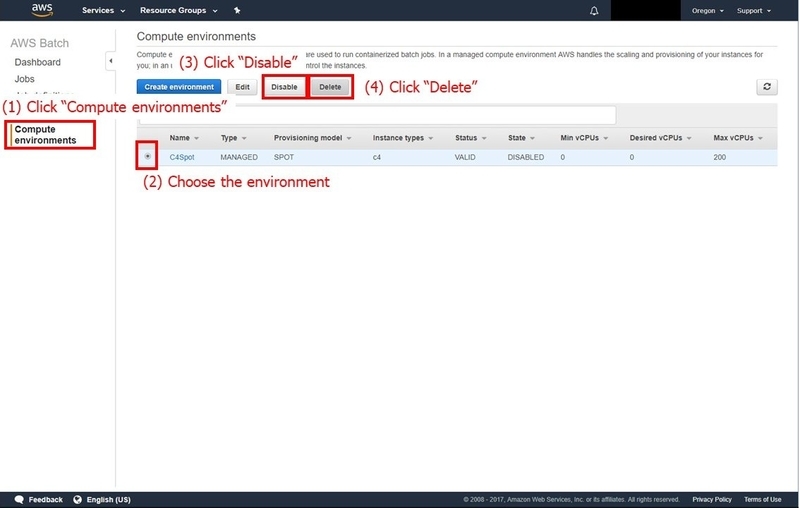

Next, disable and delete the Compute Environment.

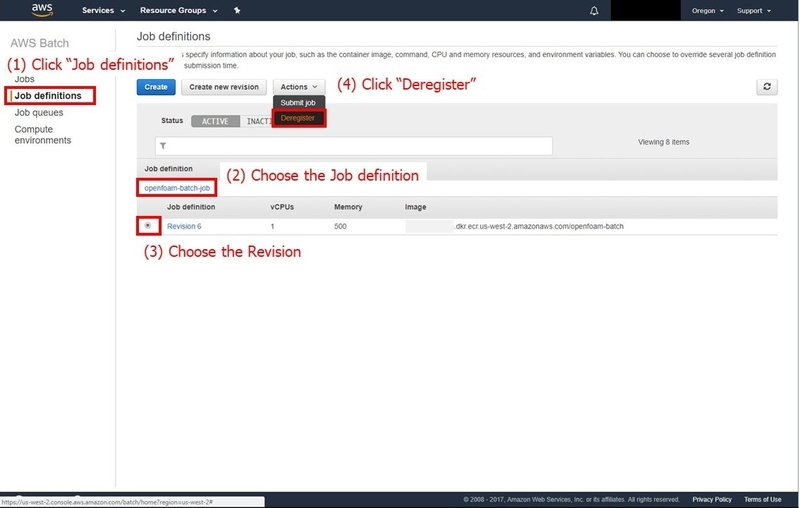

Deregister the Job Definition.

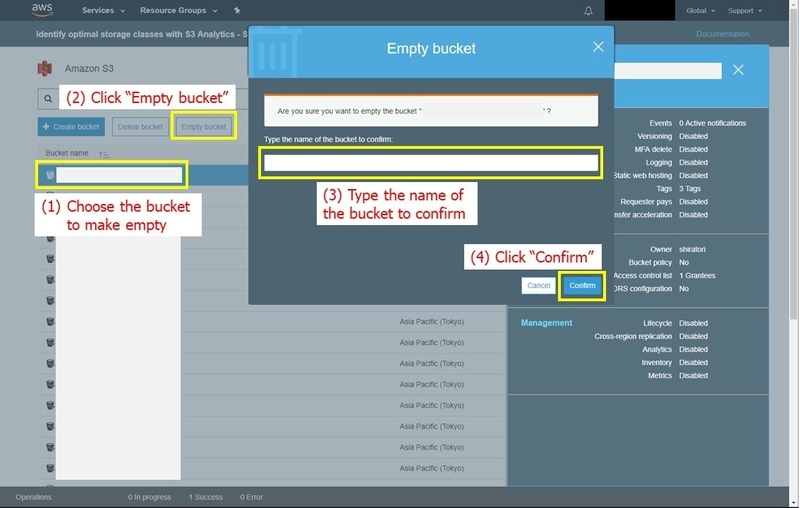

Delete all of the files in the S3 bucket. Otherwise, the stack deletion at the end of this procedure will fail.

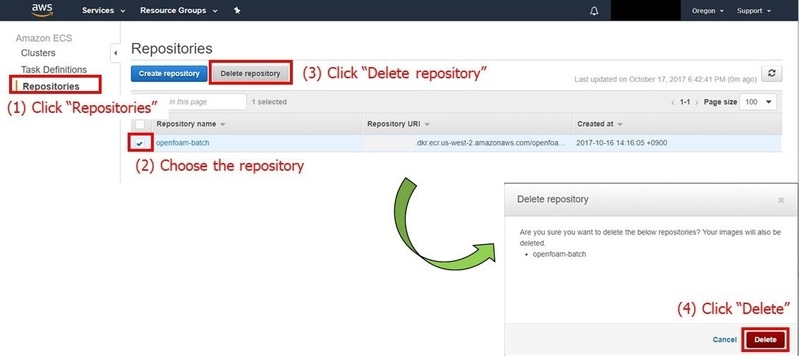

Delete the ECR repository.

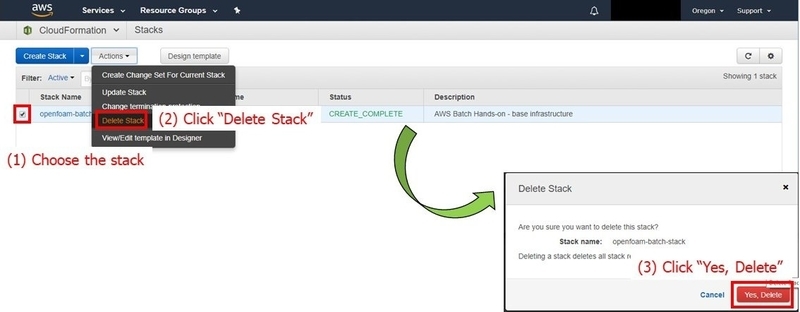

Finally, delete the CloudFormation stack. This deletes Ubuntu Bastion and the S3 bucket.
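
The same clean-up order can be written down as AWS CLI commands. The sketch below only builds and prints them; it does not execute anything. All resource names are placeholders. Note that disabling a job queue or compute environment takes a moment, so in practice you would wait for each state change to finish before issuing the following delete.

```python
import shlex


def teardown_commands(job_queue, compute_env, job_definition,
                      bucket, repository, stack, region="us-west-2"):
    """Return the clean-up commands in the order used in this article."""
    aws = ["aws", "--region", region]
    return [
        aws + ["batch", "update-job-queue", "--job-queue", job_queue,
               "--state", "DISABLED"],
        aws + ["batch", "delete-job-queue", "--job-queue", job_queue],
        aws + ["batch", "update-compute-environment",
               "--compute-environment", compute_env, "--state", "DISABLED"],
        aws + ["batch", "delete-compute-environment",
               "--compute-environment", compute_env],
        aws + ["batch", "deregister-job-definition",
               "--job-definition", job_definition],
        aws + ["s3", "rm", f"s3://{bucket}", "--recursive"],
        aws + ["ecr", "delete-repository", "--repository-name", repository,
               "--force"],
        aws + ["cloudformation", "delete-stack", "--stack-name", stack],
    ]


# Placeholder names; replace them with the ones you actually used.
commands = teardown_commands(
    job_queue="hypothetical-job-queue",
    compute_env="hypothetical-compute-env",
    job_definition="hypothetical-job-definition:1",
    bucket="123456789012-openfoam-batch-bucket",
    repository="openfoam-batch",
    stack="openfoam-batch-stack",
)
for argv in commands:
    print(" ".join(shlex.quote(part) for part in argv))
```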

Cost

Let's estimate the cost of the procedure above. The result is about 1 USD.

In this procedure, the Compute Environment is configured to scale out up to 200 vCPUs (set in computing_env.json). When I ran it, the EC2 instances shown in the following table were launched.

| Instance Type | (A) vCPU/inst. | (B) # of launched inst. | (A)×(B) |
|---|---|---|---|
| c4.8xlarge | 36 | 5 | 180 |
| c4.4xlarge | 16 | 1 | 16 |
| c4.xlarge | 4 | 1 | 4 |
| Total | | | 200 |

The cost for the instances is calculated as follows if they were on-demand instances.

| Instance Type | (B) # of launched inst. | (C) price per inst. per hour (on-demand) | (B)×(C) |
|---|---|---|---|
| c4.8xlarge | 5 | $1.591 | $7.955 |
| c4.4xlarge | 1 | $0.796 | $0.796 |
| c4.xlarge | 1 | $0.199 | $0.199 |
| Total | | | $8.95 |

In the procedure above, the Spot bid price is set to 40% of the on-demand price (configured in computing_env.json).

So even in the most expensive case, the hourly cost is estimated as follows.

$8.95 × 0.4 = $3.58

The batch jobs completed in 15 minutes, so the computing cost is estimated as follows.

$3.58 × 15 / 60 = $0.895

This is why I estimated the cost at about 1 USD.
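
The whole estimate can be reproduced with a short script. The numbers are the ones from the tables above; the variable names are my own, and the result is an upper bound because the actual Spot price may be lower than the bid.

```python
# (instance type, vCPUs per instance, launched instances, on-demand USD per hour)
FLEET = [
    ("c4.8xlarge", 36, 5, 1.591),
    ("c4.4xlarge", 16, 1, 0.796),
    ("c4.xlarge", 4, 1, 0.199),
]
BID_FRACTION = 0.4   # Spot bid as a fraction of the on-demand price
RUN_MINUTES = 15     # time the batch took to complete

# Total vCPUs launched, (A) x (B) summed over instance types.
total_vcpus = sum(vcpus * count for _, vcpus, count, _ in FLEET)

# On-demand cost per hour, (B) x (C) summed over instance types.
on_demand_per_hour = sum(count * price for _, _, count, price in FLEET)

# Worst case: every instance is charged at the full bid price.
spot_ceiling_per_hour = on_demand_per_hour * BID_FRACTION
job_cost_ceiling = spot_ceiling_per_hour * RUN_MINUTES / 60

print(f"total vCPUs:           {total_vcpus}")
print(f"on-demand per hour:    ${on_demand_per_hour:.3f}")
print(f"spot ceiling per hour: ${spot_ceiling_per_hour:.3f}")
print(f"cost for {RUN_MINUTES} min:       ${job_cost_ceiling:.3f}")
```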

Summary

In this article, OpenFOAM batch jobs were executed with AWS Batch while sweeping the inlet boundary condition.

The cost of the batch run was also estimated.

Per-second billing for EC2 is beneficial when the computing time is less than one hour.

Make your batch jobs cost-effective by combining per-second billing with Spot Instances.
